Exploring Neural Cinematics

Challenge

Exploring Neural Cinematics began with a core question: how do you maintain full artistic control when working with diffusion models that naturally drift, hallucinate, or reinterpret scenes? The challenge was to build a pipeline that honours precise composition, lighting, and spatial logic while still benefiting from the expressive strengths of AI. I needed a workflow that kept the human vision intact rather than lost inside neural randomness.

Solution

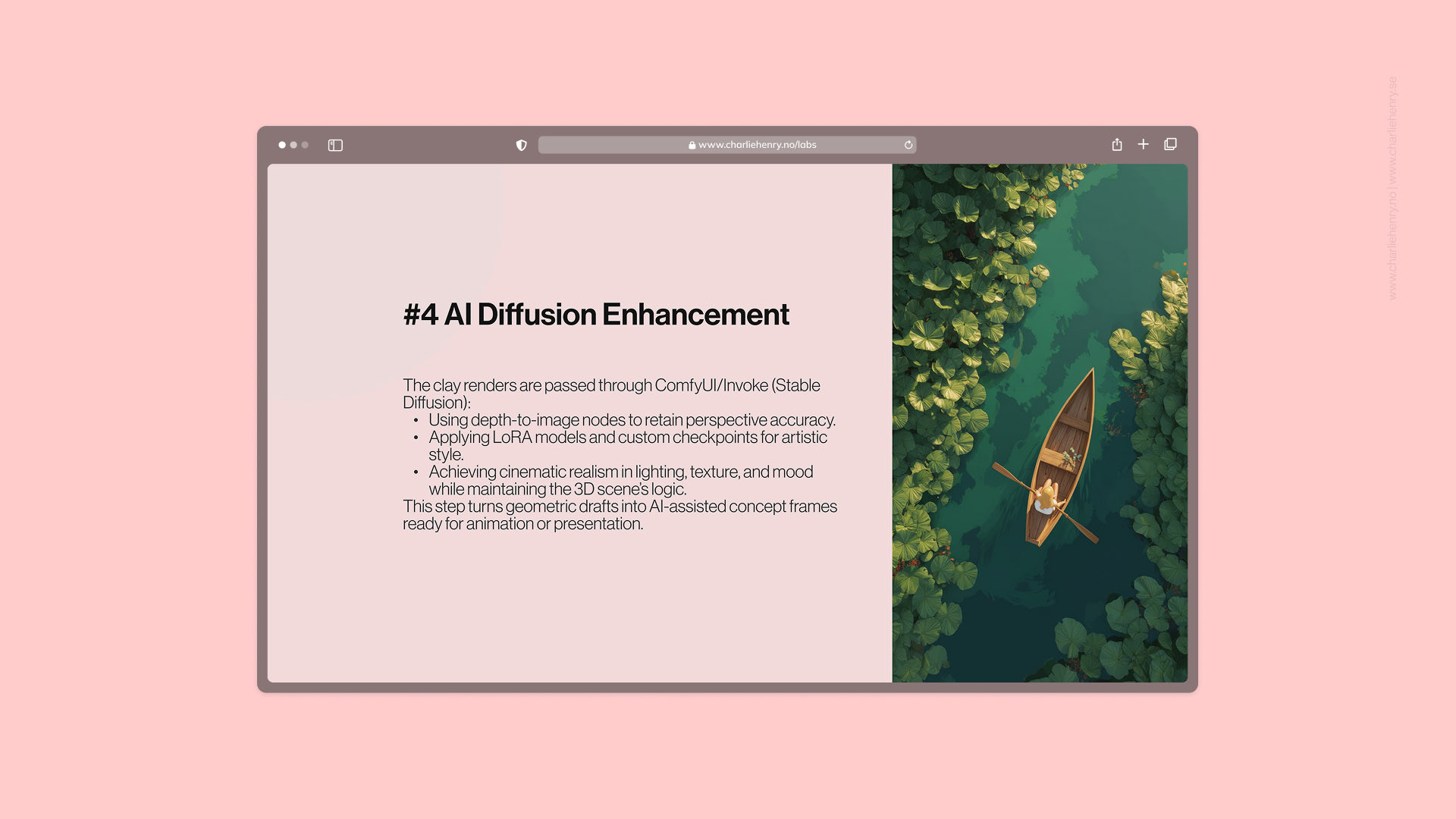

I developed a hybrid pipeline combining sketching, Blender blockouts, clay rendering, and depth-guided diffusion. Each scene began with a quick concept sketch, followed by a full 3D layout to define geometry, rhythm, and camera motion. Clay renders of that layout were then enhanced using custom ComfyUI/Invoke setups, LoRA styles, and depth-to-image conditioning to achieve cinematic realism while preserving the underlying structure. This let AI extend my direction rather than replace it, delivering consistent lighting, texture, and mood across frames.
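
Depth-to-image conditioning needs a depth map that lines up with the clay render. As a rough illustration only, not the exact setup used in this project, the sketch below turns raw per-pixel depth values (such as those from a 3D render's depth pass) into an 8-bit map. The function name `depth_to_control_map` and the `far_clip` parameter are hypothetical. The near-is-bright convention and the handling of background pixels as very large depth values are assumptions that should be checked against the depth model actually in use.

```python
from typing import List, Optional


def depth_to_control_map(
    z: List[List[float]], far_clip: Optional[float] = None
) -> List[List[int]]:
    """Map raw depth (distance from camera) to 0-255, with near = 255.

    Assumption: the conditioning model expects near surfaces to be bright.
    Assumption: pixels deeper than far_clip are empty background.
    """
    # Only in-range samples define the normalisation window.
    valid = [d for row in z for d in row if far_clip is None or d <= far_clip]
    if not valid:
        return [[0 for _ in row] for row in z]

    near, far = min(valid), max(valid)
    span = far - near

    result = []
    for row in z:
        out_row = []
        for d in row:
            if far_clip is not None and d > far_clip:
                out_row.append(0)  # background is pushed to black
            elif span == 0:
                out_row.append(255)  # flat scene: everything counts as near
            else:
                t = (d - near) / span  # 0.0 at nearest, 1.0 at farthest
                out_row.append(int(255 * (1.0 - t) + 0.5))
        result.append(out_row)
    return result
```

Normalising per frame, as this sketch does, can make brightness shift between shots when the nearest or farthest object changes. For animated sequences, a fixed near and far range shared by all frames would likely give steadier conditioning.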

Result

The outcome is a repeatable, film-grade neural cinematics workflow in which human intent and machine creativity work in harmony. The project produced cohesive, emotionally controlled sequences that remain structurally accurate and visually expressive. By bridging manual design and AI enhancement, Exploring Neural Cinematics demonstrates a new approach to concept art and motion design, one in which designers remain the authors of space, light, and story while AI amplifies their vision.